Writing performant code is crucial. To ensure that our code holds up under high-traffic conditions, we must apply a number of tactics and best practices.

Having a solid understanding of how the query works is essential. When querying the database in WordPress, you should generally use a WP_Query object. WP_Query objects take a number of useful arguments and do things behind-the-scenes that other database access methods such as get_posts() do not.

The more specific you can be with your queries, the less work you will be demanding from your SQL database. This means there are a vast number of possibilities for managing database queries.

Note: when you rely on plugins to speed up your site, you lose sight of its real performance and of how the code behaves (or fails to behave as it should). If you use full page caching, you lose the sense of what the real response time of your site is and how well your code works. Experience shows that the lack of focus on real performance is the main reason why sites crash on days like Black Friday, or when distributing large campaigns. To avoid these problems you need to write good code and queries, and keep in mind that the solution must be able to scale.

Note: It should be possible to turn off Full Page Caching on a regular day, with normal traffic, without getting nervous. And if you do things right, you should be able to turn off Full Page Caching on days with a lot of traffic too – without crashing your website.

Here are a few highlights:

A new WP_Query object runs five queries by default, including calculating pagination and priming the term and meta caches. By setting the parameters below, we can make the query faster by preventing some of those extra database queries from being executed.

'no_found_rows' => true // useful when pagination is not needed.

'update_post_meta_cache' => false // useful when post meta will not be utilized.

'update_post_term_cache' => false // useful when taxonomy terms will not be utilized.

'fields' => 'ids' // useful when only the post IDs are needed (less typical).

Note: We should not use these parameters all the time, because adding things to the cache is usually the right thing to do. They are useful in specific circumstances, and you should use them only when you know the data they skip will not be needed.
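As a minimal sketch of how these flags might be combined, the hypothetical helper below builds an argument array for a small, non-paginated list that reads neither post meta nor terms. The function name weszty_web_lean_query_args and its default values are assumptions made for illustration; they are not part of WordPress.

```php
<?php
/**
 * Build WP_Query arguments for a small, non-paginated list.
 *
 * Hypothetical helper: the name and the default values are illustrative.
 *
 * @param array $overrides Arguments that replace the defaults below.
 * @return array Arguments suitable for passing to WP_Query.
 */
function weszty_web_lean_query_args( array $overrides = array() ) {
	$defaults = array(
		'posts_per_page'         => 10,    // Always set an explicit upper limit.
		'no_found_rows'          => true,  // Skip the pagination count.
		'update_post_meta_cache' => false, // Post meta is not read in this loop.
		'update_post_term_cache' => false, // Terms are not read in this loop.
	);

	// Values in $overrides win over the defaults.
	return array_merge( $defaults, $overrides );
}
```

Inside WordPress the result would be passed straight to the query, for example new WP_Query( weszty_web_lean_query_args( array( 'category_name' => 'news' ) ) ). If the loop later turns out to need meta or terms, the matching flag should be switched back to true, otherwise each access can trigger its own query.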

Do not use:

'posts_per_page' => -1 // get all

This is a performance risk. What if we have 100,000 posts? This could crash the site. It is better to determine a reasonable upper limit for your situation.

// Query for 500 posts.

new WP_Query( array(

'posts_per_page' => 500

));

Do not use $wpdb or get_posts() unless you have good reason. get_posts() actually calls WP_Query, but calling get_posts() directly bypasses a number of filters by default. Not sure whether you need these things or not? You probably don’t.

If you don’t plan to paginate query results, always pass no_found_rows => true to WP_Query.

This will tell WordPress not to run SQL_CALC_FOUND_ROWS on the SQL query, drastically speeding up your query. SQL_CALC_FOUND_ROWS calculates the total number of rows in your query which is required to know the total amount of “pages” for pagination.

// Skip SQL_CALC_FOUND_ROWS for performance (no pagination).

new WP_Query( array(

'no_found_rows' => true,

));

Avoid using post__not_in. While simple to use, it performs poorly. The WP_Query argument post__not_in appears to be a helpful option, but it can lead to poor performance on a busy and/or large site because it lowers the cache hit rate.

Problems with this approach:

Let’s say you want the most recent posts but want to exclude certain posts, such as the current one, from the list.

// Display the most recent news posts (but not the current one)

function weszty_web_my_recent_posts( $exclude = array() ) {

$args = array(

'category_name' => 'news',

'posts_per_page' => 5,

'post_status' => 'publish',

'ignore_sticky_posts' => true,

'no_found_rows' => true,

'post__not_in' => $exclude,

);

$recent_posts = new WP_Query( $args );

echo '<div class="most-recent-news"><h1>News</h1>';

while ( $recent_posts->have_posts() ) {

$recent_posts->the_post();

the_title( '<h2><a href="' . get_permalink() . '">', '</a></h2>' );

}

echo '</div>';

wp_reset_postdata();

}

The query, which was previously leveraging the built-in query cache, is now unique for every post or page due to the added AND ID NOT IN ( '12345' ). This is because the cache key (a hash of the arguments) now includes a list of at least one ID, and that list is different across all posts. So instead of subsequent pages obtaining the list of 5 posts from the object cache, they miss the cache, and the database does the same work on multiple pages.

As a result, each of those queries is now being cached separately, increasing the use of cache unnecessarily. For a site with hundreds of thousands of posts, this will potentially impact the size of object cache and result in premature cache evictions.

What to do instead?

In almost all cases, you can gain great speed improvements by requesting more posts and skipping the excluded posts in PHP.

You can improve performance by keeping the post query identical across all URLs: retrieve the most recent 6 posts every time, so that the result is served from the object cache. If you anticipate the $exclude list to be larger than one post, then set the limit higher, perhaps to 10. Make it a fixed number, not a variable one, to reduce the number of cache variants.

The updated function no longer excludes the post(s) in SQL, it uses conditionals in the loop:

// Display the most recent posts (but not the current one)

function weszty_web_my_recent_posts( $exclude = array() ) {

$args = array(

'category_name' => 'news',

'posts_per_page' => 10,

'post_status' => 'publish',

'ignore_sticky_posts' => true,

'no_found_rows' => true,

);

$recent_posts = new WP_Query( $args );

echo '<div class="most-recent-news"><h1>News</h1>';

$posts = 0; // count the posts displayed, up to 5

while ( $recent_posts->have_posts() && $posts < 5 ) {

$recent_posts->the_post();

$current = get_the_ID();

if ( ! in_array( $current, $exclude ) ) {

$posts++;

the_title( '<h2><a href="' . get_permalink() . '">', '</a></h2>' );

}

}

echo '</div>';

wp_reset_postdata();

}

This approach, while requiring a tiny bit of logic in PHP, leverages the query cache better, and avoids creating many cache variations that might impact the site’s scalability and stability.

Post meta lets us store unique information about specific posts. As such, the way post meta is stored does not facilitate efficient post lookups. Generally, looking up posts by post meta should be avoided (although sometimes it can’t be). If you have to use a meta query, make sure that it’s not the main query and that it’s cached.

Build arrays that encourage lookup by key instead of search by value:

in_array() is not an efficient way to find if a given value is present in an array. The worst case scenario is that the whole array needs to be traversed, thus making it a function with O(n) complexity. VIP review reports in_array() use as an error, as it’s known not to scale.

The best way to check if a value is present in an array is by building arrays that encourage lookup by key and use isset(). isset() uses an O(1) hash search on the key and will scale.

In case you don’t have control over the array creation process and are forced to use in_array(), to improve the performance slightly, you should always set the third parameter to true to force use of strict comparison.
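The difference can be sketched in plain PHP. The IDs and the helper name weszty_web_is_excluded below are made up for illustration; the point is only that the list is flipped once so that each later check is a key lookup rather than a scan.

```php
<?php
// Hypothetical list of post IDs to exclude (sample data for illustration).
$excluded_ids = array( 12, 57, 103 );

// Flip the list once so the IDs become keys: array( 12 => 0, 57 => 1, 103 => 2 ).
$excluded_lookup = array_flip( $excluded_ids );

/**
 * Check whether an ID is excluded using a key lookup instead of a value search.
 *
 * @param int   $post_id Post ID to test.
 * @param array $lookup  Array whose keys are the excluded IDs.
 * @return bool True if the ID is present as a key.
 */
function weszty_web_is_excluded( $post_id, array $lookup ) {
	// isset() is a hash lookup on the key; it does not scan the array.
	return isset( $lookup[ $post_id ] );
}
```

When the array cannot be restructured, the strict form in_array( 57, $excluded_ids, true ) is the fallback described above. Note that strict comparison also checks types, so the string '57' would not match the integer 57.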

Multi-dimensional queries should be avoided.

Examples of multi-dimensional queries include:

* Querying for posts based on terms across multiple taxonomies

* Querying multiple post meta keys

// Query for posts with both a particular category and tag.

new WP_Query( array(

'category_name' => 'cat-slug',

'tag' => 'tag-slug',

));

Each extra dimension of a query joins an extra database table. Instead, query by the minimum number of dimensions possible and use PHP to filter out results you don’t need.
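One way this could look is sketched below: the query is run on the category alone, and the tag condition is applied afterwards in PHP. The helper weszty_web_filter_by_tag is hypothetical, and the tag check is passed in as a callback so that the sketch runs outside WordPress; inside WordPress the core has_tag() function would be the natural callback.

```php
<?php
/**
 * Keep only the posts that pass a second condition, checked in PHP.
 *
 * Hypothetical helper. In WordPress, $has_tag would usually be the core
 * has_tag() function; it is injected here so the sketch runs on its own.
 *
 * @param array    $post_ids Post IDs returned by a single-dimension query.
 * @param string   $tag_slug Tag slug that each kept post must have.
 * @param callable $has_tag  Callback called as $has_tag( $tag, $post ), returning bool.
 * @return array Post IDs that have the tag, re-indexed from zero.
 */
function weszty_web_filter_by_tag( array $post_ids, $tag_slug, callable $has_tag ) {
	$kept = array();

	foreach ( $post_ids as $post_id ) {
		if ( $has_tag( $tag_slug, $post_id ) ) {
			$kept[] = $post_id;
		}
	}

	return $kept;
}
```

In a theme, this might be called as weszty_web_filter_by_tag( wp_list_pluck( $query->posts, 'ID' ), 'tag-slug', 'has_tag' ) after querying by category_name only. Because some posts are dropped, the query should request a few more posts than will be displayed, and the term cache should stay enabled so the tag checks do not trigger extra queries.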

Taxonomy queries should set include_children to false

If the term is shared it will not include the children term. If the term is not shared, it will include the children.

As of WordPress 4.4, terms have been split, which means that this now adds 'include_children' => true to almost all taxonomy queries.

This can have a very significant performance impact on code that was performant previously and in some cases, queries that used to return almost instantly will time out. Therefore, we now recommend 'include_children' => false to be added to all taxonomy queries when possible.
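A minimal sketch of such a query is shown below. The helper name weszty_web_term_query_args and the limit of 10 posts are assumptions for illustration; the tax_query keys themselves are standard WP_Query arguments.

```php
<?php
/**
 * Build WP_Query arguments for a single taxonomy term without child terms.
 *
 * Hypothetical helper; the function name and the limit of 10 are illustrative.
 *
 * @param string $taxonomy  Taxonomy name, e.g. 'category'.
 * @param string $term_slug Slug of the term to match.
 * @return array Arguments suitable for passing to WP_Query.
 */
function weszty_web_term_query_args( $taxonomy, $term_slug ) {
	return array(
		'posts_per_page' => 10,
		'no_found_rows'  => true,
		'tax_query'      => array(
			array(
				'taxonomy'         => $taxonomy,
				'field'            => 'slug',
				'terms'            => $term_slug,
				'include_children' => false, // Match this term only, not its descendants.
			),
		),
	);
}
```

It could be used as new WP_Query( weszty_web_term_query_args( 'category', 'news' ) ). If posts assigned only to child terms must appear in the results, this flag cannot simply be turned off and the trade-off needs to be weighed for that query.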

WP_Query vs. get_posts() vs. query_posts()

As outlined above, get_posts() and WP_Query, apart from some slight nuances, are quite similar. Both have the same performance cost (minus the implication of skipping filters): the query performed.

query_posts(), on the other hand, behaves quite differently than the other two and should almost never be used. Specifically:

- It creates a new WP_Query object with the parameters you specify.

- It replaces the existing main query loop with a new instance of WP_Query.

As noted in the WordPress Docs, query_posts() isn’t meant to be used by plugins or themes. Because it replaces and possibly re-runs the main query, query_posts() is not performant and certainly not an acceptable way of changing the main query.

Database queries

Direct database queries should be avoided wherever possible, and WordPress API functions should be used instead for fetching and manipulating data.

Disable the posts_groupby filter.

The posts_groupby filter adds the ability to run GROUP BY on the returned array of posts. In most cases, this is not necessary. To disable it, pass WordPress’ __return_false() function to add_filter():

add_filter( 'posts_groupby', '__return_false' );

And to reset after your query:

remove_filter( 'posts_groupby', '__return_false' );

If WordPress API functions cannot be used, and direct queries are required, follow these best practices:

- Use filters to adjust queries when needed. Filters such as posts_where can help adjust the default queries done by WP_Query. This helps keep code compatible with other plugins. Many filters are available to hook into inside /wp-includes/query.php.

- Make sure that all queries are protected against SQL injection by making use of $wpdb->prepare and other escaping functions like esc_sql and $wpdb->esc_like.

- Avoid cross-table queries, especially queries that could contain huge datasets (e.g. negating taxonomy queries like the -cat option to exclude posts of a certain category). These queries can cause a huge load on the database servers.

- Though many operations can be made on the database side, code will scale much better by keeping database queries simple and performing necessary calculations and logic in PHP.

- Avoid using DISTINCT, GROUP BY, or other query statements that cause the generation of temporary tables to deliver the results.

- Be aware of the amount of data that is requested. Include defensive limits.

- When creating queries in a development environment, examine the queries for performance issues using the EXPLAIN statement. Confirm that indexes are being used.

- Cache the results of queries where it makes sense.

- Avoid queries that only use the meta_value field. By default, WordPress’ postmeta table comes with an index on meta_key (but not meta_value).

- You can try to add an index on meta_key + meta_value. To use this index, called weszty_meta_key_value, queries must include both key and value in the WHERE clause.

- Some meta_value queries can be transformed into taxonomy queries. For example, instead of using a meta_value to filter if a post should be shown to visitors with membership level “Premium”, use a custom taxonomy and a term for each of the membership levels in order to leverage the indexes.

- When meta_value is set as a binary value (e.g. “hide_on_homepage” = “true”), MySQL will look at every single row that has the meta_key “hide_on_homepage” in order to check for the meta_value “true”. The solution is to change this so that the mere presence of the meta_key means that the post should be hidden on the homepage. If a post shouldn’t be hidden on the homepage, simply delete the “hide_on_homepage” meta_key. This will leverage the meta_key index and can result in large performance gains.

- In a non-binary situation, instead of setting meta_key equal to “primary_category” and meta_value equal to “sports”, you can set meta_key to “primary_category_sports”.

- To prevent an extra JOIN, set the post_type parameter explicitly: 'post_type' => 'post'

- Use tax_query over meta_query when searching for posts. Unlike taxonomies, postmeta values do not have indexes. meta_query searches through the entire wp_postmeta table, which results in slow queries.
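The presence-based flag from the list above could be queried roughly as follows. This is only a sketch: the helper name weszty_web_visible_posts_args and the post limit are assumptions, and whether the resulting SQL actually uses the meta_key index on a given site should be confirmed with EXPLAIN.

```php
<?php
/**
 * Build WP_Query arguments that exclude posts flagged by the presence of a meta key.
 *
 * Hypothetical helper; 'hide_on_homepage' follows the example in the list,
 * and the function name and post limit are assumptions.
 *
 * @param string $flag_key Meta key whose mere existence marks a post as hidden.
 * @return array Arguments suitable for passing to WP_Query.
 */
function weszty_web_visible_posts_args( $flag_key = 'hide_on_homepage' ) {
	return array(
		'post_type'      => 'post', // Explicit post type, as the list recommends.
		'posts_per_page' => 10,
		'no_found_rows'  => true,
		'meta_query'     => array(
			array(
				'key'     => $flag_key,
				'compare' => 'NOT EXISTS', // Only the key is tested, never meta_value.
			),
		),
	);
}
```

With this design the flag is set with update_post_meta( $post_id, 'hide_on_homepage', 1 ) and cleared with delete_post_meta( $post_id, 'hide_on_homepage' ), so no query ever has to compare meta_value.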

Caching

Caching is the act of storing computed data for later use, and it is an important concept in WordPress. There are different ways to employ caching, and often multiple methods will be used.

The “Object Cache”

The act of storing data or objects for later use is known as object caching. Objects in WordPress are cached in memory so that they can be retrieved quickly.

In WordPress, the object cache functionality provided by WP_Object_Cache, and the Transients API are great solutions for improving performance on long-running queries, complex functions, or similar.

On a regular WordPress install, the difference between transients and the object cache is that transients are persistent and would write to the options table, while the object cache only persists for the particular page load.

It is possible to create a transient that will never expire by omitting the third parameter. This should be avoided, as any non-expiring transients are autoloaded on every page and you may actually decrease performance by doing so.

On environments with a persistent caching mechanism (i.e. Memcache, Redis, or similar) enabled, the transient functions become wrappers for the normal WP_Object_Cache functions. The objects are identically stored in the object cache and will be available across page loads.

Note: as the objects are stored in memory, you need to consider that these objects can be cleared at any time and that your code must be constructed in a way that it would not rely on the objects being in place.

This means you always need to ensure you check for the existence of a cached object and be ready to generate it in case it’s not available. Here is an example:

/**

* Retrieve top 10 most-commented posts and cache the results.

*

* @return array|WP_Error Array of WP_Post objects with the highest comment counts,

* WP_Error object otherwise.

*/

function weszty_web_get_top_commented_posts() {

// Check for the top_commented_posts key in the 'top_posts' group.

$top_commented_posts = wp_cache_get( 'weszty_web_top_commented_posts', 'top_posts' );

// If nothing is found, build the object.

if ( false === $top_commented_posts ) {

// Grab the top 10 most commented posts.

$top_commented_query = new WP_Query( 'orderby=comment_count&posts_per_page=10' );

// Keep only the array of WP_Post objects so cache hits and misses return the same type.

$top_commented_posts = $top_commented_query->posts;

if ( ! empty( $top_commented_posts ) ) {

// Cache the array of posts and store it for 5 minutes (300 secs).

wp_cache_set( 'weszty_web_top_commented_posts', $top_commented_posts, 'top_posts', 5 * MINUTE_IN_SECONDS );

}

}

return $top_commented_posts;

}

In the above example, the cache is checked for an object with the 10 most commented posts, and the list is generated in case the object is not in the cache yet. Generally, calls to WP_Query other than the main query should be cached.

As the content is cached for 300 seconds, the query execution is limited to one time every 5 minutes, which is nice.

However, the cache rebuild in this example would always be triggered by a visitor who hits a stale cache, which increases the page load time for that visitor. Under high-traffic conditions, this can cause race conditions when a lot of people hit a stale cache for a complex query at the same time. In the worst case, this could cause queries at the database server to pile up, causing replication lag or worse.

That said, a relatively easy solution for this problem is to make sure that your users would ideally always hit a primed cache. To accomplish this, you need to think about the conditions that need to be met to make the cached value invalid. In our case this would be the change of a comment.

The easiest hook we could identify that would be triggered for any of these actions is wp_update_comment_count, which is fired as do_action( 'wp_update_comment_count', $post_id, $new, $old ).

With this in mind, the function could be changed so that the cache would always be primed when this action is triggered. Here is how it’s done:

/**

* Prime the cache for the top 10 most-commented posts.

*

* @param int $post_id Post ID.

* @param int $new The new comment count.

* @param int $old The old comment count.

*/

function weszty_web_refresh_top_commented_posts( $post_id, $new, $old ) {

// Force the cache refresh for top-commented posts.

weszty_web_get_top_commented_posts( true );

}

add_action( 'wp_update_comment_count', 'weszty_web_refresh_top_commented_posts', 10, 3 );

/**

* Retrieve top 10 most-commented posts and cache the results.

*

* @param bool $force_refresh Optional. Whether to force the cache to be refreshed. Default false.

* @return array|WP_Error Array of WP_Post objects with the highest comment counts, WP_Error object otherwise.

*/

function weszty_web_get_top_commented_posts( $force_refresh = false ) {

// Check for the top_commented_posts key in the 'top_posts' group.

$top_commented_posts = wp_cache_get( 'weszty_web_top_commented_posts', 'top_posts' );

// If nothing is found, build the object.

if ( true === $force_refresh || false === $top_commented_posts ) {

// Grab the top 10 most commented posts.

$top_commented_query = new WP_Query( 'orderby=comment_count&posts_per_page=10' );

// Keep only the array of WP_Post objects so cache hits and misses return the same type.

$top_commented_posts = $top_commented_query->posts;

if ( ! empty( $top_commented_posts ) ) {

// In this case we don't need a timed cache expiration.

wp_cache_set( 'weszty_web_top_commented_posts', $top_commented_posts, 'top_posts' );

}

}

return $top_commented_posts;

}

With this implementation, you can keep the cache object forever and don’t need to add an expiration for the object, because a new cache entry is created whenever it is required. Just keep in mind that some external caches (like Memcache) can invalidate cache objects without any input from WordPress.

For that reason, it’s best to make the code that repopulates the cache available for many situations.

In some cases, it might be necessary to create multiple objects depending on the parameters a function is called with. In these cases, it’s usually a good idea to create a cache key which includes a representation of the variable parameters. A simple solution for this would be appending an md5 hash of the serialized parameters to the key name.

// Here is your string of inputs

$args = 'value-1=value&value-2=250000';

// Create a unique hashed key from the arguments

$key = md5( $args );

//$key = md5( json_encode( $args ) ); // If $args is an array

// Set the transient name

$transient_name = 'custom_input_' . $key;

if ( false === ( $query = get_transient( $transient_name ) ) ) {

$query = get_posts( $args . '&fields=ids' ); // Only get post IDs. Alter if $args is an array.

// Set the transient only if we have results.

if ( $query ) {

set_transient(

$transient_name, // Transient name.

$query, // What should be saved.

7 * DAY_IN_SECONDS // Lifespan of the transient is 7 days.

);

}

}

We can then just flush all of these transients whenever a post is published, deleted, restored, or updated:

add_action( 'transition_post_status', function () {

global $wpdb;

$wpdb->query( "DELETE FROM $wpdb->options WHERE `option_name` LIKE ('_transient%_custom_input_%')" );

$wpdb->query( "DELETE FROM $wpdb->options WHERE `option_name` LIKE ('_transient_timeout%_custom_input_%')" );

});

Page Caching

Page caching in the context of web development refers to storing a requested location’s entire output to serve in the event of subsequent requests to the same location.

There are many plugins for this type of cache: LSCache, W3TC, SuperCache, etc. These plugins are used to prevent a flood of traffic from breaking your site. They do this by serving old (5 minute max age by default, but adjustable) pages to new users.

This reduces the demand on the web server CPU and the database. It also means some people may see a page that is a few minutes old. However, this only applies to people who have not interacted with your website before. Once they have logged-in or left a comment, they will always get fresh pages.

Although these plugins have a lot of benefits, they also come with a couple of code design requirements:

- As the rendered HTML of your pages might be cached, you cannot rely on server side logic related to $_SERVER, $_COOKIE or other values that are unique to a particular user.

- You can however implement cookie or other user based logic on the front-end (e.g. with JavaScript).

AJAX Endpoints

AJAX stands for Asynchronous JavaScript and XML. Often, we use JavaScript on the client-side to ping endpoints for things like infinite scroll.

WordPress provides an API to register AJAX endpoints on wp-admin/admin-ajax.php. However, WordPress does not cache queries within the administration panel for obvious reasons. Therefore, if you send requests to an admin-ajax.php endpoint, you are bootstrapping WordPress and running un-cached queries. Used properly, this is totally fine. However, this can take down a website if used on the frontend.

For this reason, front-facing endpoints should be written by using the Rewrite Rules API and hooking early into the WordPress request process.

Here is a simple example of how to structure your endpoints:

/**

* Register a rewrite endpoint for the API.

*/

function weszty_web_add_api_endpoints() {

add_rewrite_tag( '%api_item_id%', '([0-9]+)' );

add_rewrite_rule( 'api/items/([0-9]+)/?', 'index.php?api_item_id=$matches[1]', 'top' );

}

add_action( 'init', 'weszty_web_add_api_endpoints' );

/**

* Handle data (maybe) passed to the API endpoint.

*/

function weszty_web_do_api() {

global $wp_query;

$item_id = $wp_query->get( 'api_item_id' );

if ( ! empty( $item_id ) ) {

$response = array();

// Do stuff with $item_id

wp_send_json( $response );

}

}

add_action( 'template_redirect', 'weszty_web_do_api' );

Cache Remote Requests

Requests made to third-parties, whether synchronous or asynchronous, should be cached. Not doing so will result in your site’s load time depending on an unreliable third-party!

Here is a quick code example for caching a third-party request:

/**

* Retrieve posts from another blog and cache the response body.

*

* @return string Body of the response. Empty string if no body or incorrect parameter given.

*/

function weszty_web_get_posts_from_other_blog() {

if ( false === ( $posts = wp_cache_get( 'weszty_web_other_blog_posts' ) ) ) {

$request = wp_remote_get( ... );

$posts = wp_remote_retrieve_body( $request );

wp_cache_set( 'weszty_web_other_blog_posts', $posts, '', HOUR_IN_SECONDS );

}

return $posts;

}

weszty_web_get_posts_from_other_blog() can be called to get posts from a third-party and will handle caching internally.

Data Storage

Utilizing built-in WordPress APIs we can store data in a number of ways.

There are a number of performance considerations for each WordPress table:

1) Options – The options API is a simple key-value storage system backed by a MySQL table. This API is meant to store things like settings and not variable amounts of data.

Site performance, especially on large websites, can be negatively affected by a large options table. It’s recommended to regularly monitor and keep this table under 500 rows. The “autoload” field should only be set to ‘yes’ for values that need to be loaded into memory on each page load.

Caching can also be negatively affected by a large wp_options table. Popular caching solutions such as Memcached place a 1MB limit on individual values stored in cache. A large options table can easily exceed this limit, severely slowing each page load.

2) Post Meta or Custom Fields – Post meta is an API meant for storing information specific to a post. For example, if we had a custom post type, “Product”, “serial number” would be information appropriate for post meta. Because of this, it usually doesn’t make sense to search for groups of posts based on post meta.

3) Taxonomies and Terms – Taxonomies are essentially groupings (categories). If we have a classification that spans multiple posts, it is a good fit for a taxonomy term. For example, if we had a custom post type, “Car”, “Nissan” would be a good term since multiple cars are made by Nissan. Taxonomy terms can be efficiently searched across as opposed to post meta.

4) Custom Post Types – WordPress has the notion of “post types”. “Post” is a post type which can be confusing. We can register custom post types to store all sorts of interesting pieces of data. If we have a variable amount of data to store such as a product, a custom post type might be a good fit.

While it is possible to use WordPress’ Filesystem API to interact with a huge variety of storage endpoints, using the filesystem to store and deliver data outside of regular asset uploads should be avoided, as these methods conflict with most modern / secure hosting solutions.

Database Writes

Writing information to the database is at the core of any website you build. Here are some tips:

- Generally, do not write to the database on frontend pages as doing so can result in major performance issues and race conditions.

- When multiple threads (or page requests) read or write to a shared location in memory and the order of those reads or writes is unknown, you have what is known as a race condition.

- Store information in the correct place.

- Certain options are “autoloaded” or put into the object cache on each page load. When creating or updating options, you can pass an $autoload argument to add_option(). If your option is not going to get used often, it shouldn’t be autoloaded. As of WordPress 4.2, update_option() supports configuring autoloading directly by passing an optional $autoload argument. Using this third parameter is preferable to using a combination of delete_option() and add_option() to disable autoloading for existing options.

Elasticsearch

Consider using Elasticsearch instead of MySQL if you don’t have too many distinct meta keys.

The combination of using Elasticsearch on your site (regardless of whether it’s for a particular query or just in general) and having multiple distinct meta_keys (e.g. non-binary situations) could potentially cause severe performance problems based on the way Elasticsearch stores the data and not how it queries the data.

Hands-on Examples for using transients in your Theme or Plugin

WordPress isn’t architected to serve fragments of pages by default, though it can be customized to do so. Fragment Caching means storing a result (HTML output) so that it can be delivered much faster later.

You can choose what elements to cache, and you don’t need to cache the full output – like Full Page Caching.

My approach was to make a wrapper function, that would fetch the template from the transient cache if found, and only if not found it would actually render the partial, store the output using the PHP output buffer and then echo out the partial’s contents that was either just generated or fetched from the cache. The inline code comments explain each step in detail.

/*

* Wrapper for get_template_part to get the ready-rendered

* template from the WP Transient cache if it exists.

*

* Note! This should be only used for static pages that always

* have the same contents, or at least can be so for an hour

* (which is the current cache expiry time).

*/

function weszty_web_template_cache( $template_path, $template_name ) {

if ( wp_is_mobile() ) {

$cache_key = 'weszty-partials-'. $template_name .'_mobile';

} else {

$cache_key = 'weszty-partials-'. $template_name;

}

if ( ! $output = get_transient( $cache_key ) ) {

ob_start();

get_template_part( $template_path, $template_name );

$output = ob_get_clean();

// You can minify the $output right here, before saving

set_transient( $cache_key, $output, HOUR_IN_SECONDS );

}

echo $output;

}

WordPress constants created especially for this case:

MINUTE_IN_SECONDS (60)

HOUR_IN_SECONDS (3600)

DAY_IN_SECONDS (86400)

WEEK_IN_SECONDS (604800)

YEAR_IN_SECONDS (31536000)

It looks something like this (I use shortcodes, but I think this is a better way to visualize):

get_header();

get_template_part( 'partials/fp', 'hero' );

weszty_web_template_cache( 'partials/fp', 'features' );

weszty_web_template_cache( 'partials/fp', 'segment-section' );

weszty_web_template_cache( 'partials/fp', 'products' );

weszty_web_template_cache( 'partials/fp', 'customers' );

weszty_web_template_cache( 'partials/fp', 'blog-section' );

get_template_part( 'partials/fp', 'cta-section' );

get_template_part( 'partials/tech', 'logos-section' );

get_footer();

Speed Up WordPress Navigation Menus

Whenever a WordPress nav menu is outputted (using the core function wp_nav_menu( $args )), a complex function using multiple queries is executed. We can definitely speed that up a lot! Take a look at the following function that you can easily integrate into your own theme: Just replace the calls to wp_nav_menu with a call to this function:

function weszty_web_nav_menu( $theme_location, $class = 'menu' ) {

$menu = get_transient( 'nav-' . $theme_location );

if( $menu === false ) {

if( has_nav_menu( $theme_location ) ) {

$menu = wp_nav_menu( array(

'theme_location' => $theme_location,

'menu_class' => $class,

'echo' => 0 // or just use the buffer

) );

set_transient( 'nav-' . $theme_location, $menu, WEEK_IN_SECONDS );

}

}

echo $menu;

}

The only part that is a little more complicated is finding all the places in the code that make changes affecting the content stored in the transient. For a navigation menu, this is not too hard: including the code below in your theme will do the trick.

function weszty_web_invalidate_nav_cache( $id ) {

$locations = get_nav_menu_locations();

if( is_array( $locations ) && $locations ) {

$locations = array_keys( $locations, $id );

if( $locations ) {

foreach( $locations as $location ) {

delete_transient( 'nav-' . $location );

}

}

}

}

add_action( 'wp_update_nav_menu', 'weszty_web_invalidate_nav_cache' );

Now, we are caching the rendered HTML output! No matter how many extra SQL queries are needed to generate the HTML, they are run only once, and the complete HTML is cached. Until the cache expires, we are always displaying HTML rendered without the need for any additional SQL queries or processing.

Conclusion

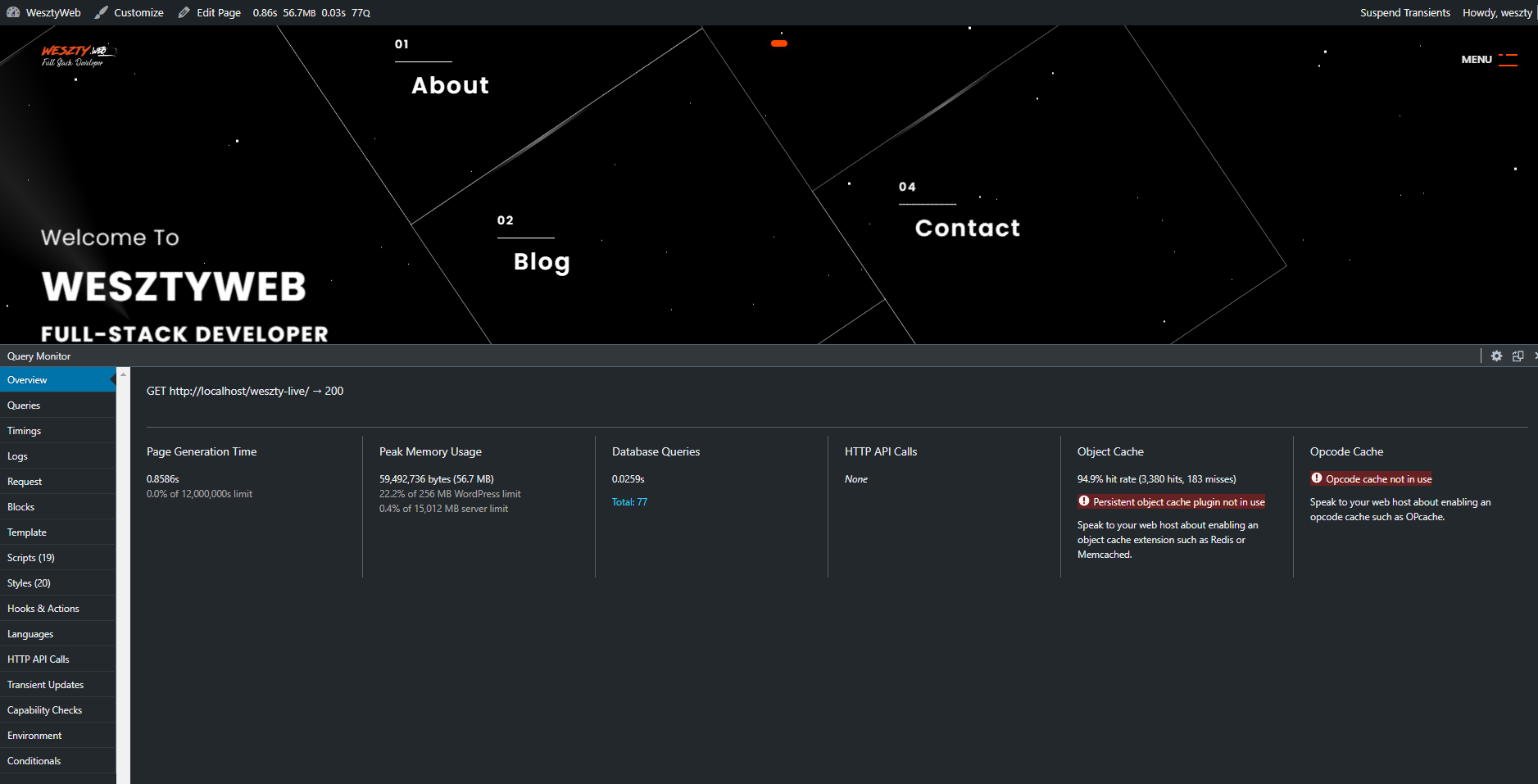

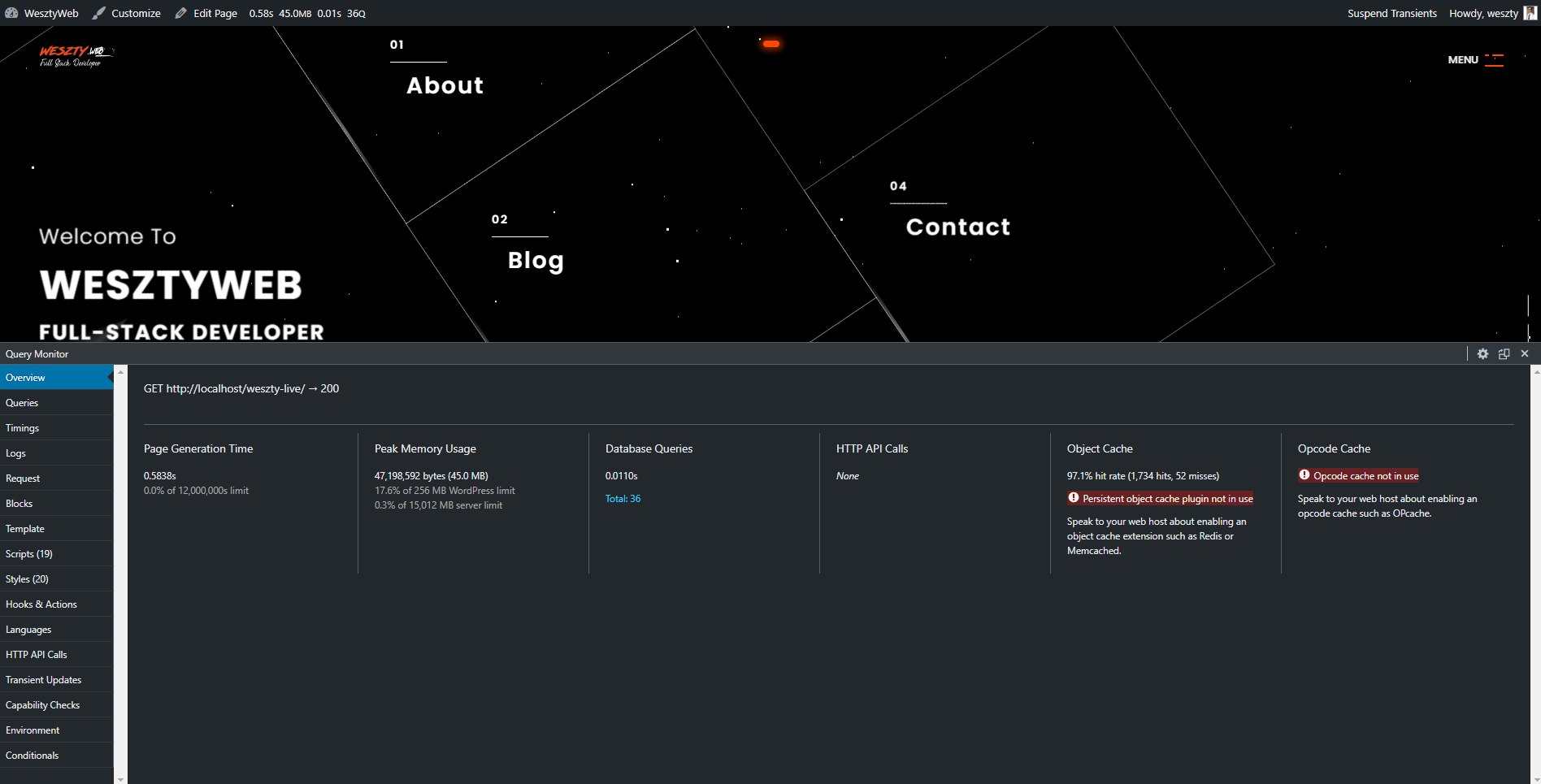

As you can see, implementing the above methods could significantly improve the performance of your theme/plugin. If a user of your theme/plugin decides to use a specialized caching plugin, all the better, but make sure that your code is optimized first (I use LSCache on top of all this).